Can AI image generators do graphic design? Two new benchmarks set out to answer that question with data, not opinions. One is Microsoft's BizGenEval. The other is LICA's Graphic-Design-Bench. Both tested AI image generators on real commercial design tasks: slides, posters, charts, webpages, business graphics.

The limitations they uncovered are significant. Across the 26 models BizGenEval tested and the 49 task types Graphic-Design-Bench defines, the answer is a consistent no.

Not "no, but getting close." More like "no, and the gap is structural."

I've been following this space closely at Sivi, and these benchmarks articulate something we've seen firsthand in our own model development. Image generation and graphic design are fundamentally different problems. Prettier pixels don't solve the layout, typography, and brand compliance challenges that commercial design demands.

Here's what the benchmarks actually measure, and why the results matter.

BizGenEval: How Microsoft tests AI image generators on commercial design

BizGenEval was built by a Microsoft research team to evaluate how well AI image generators handle real-world commercial content.

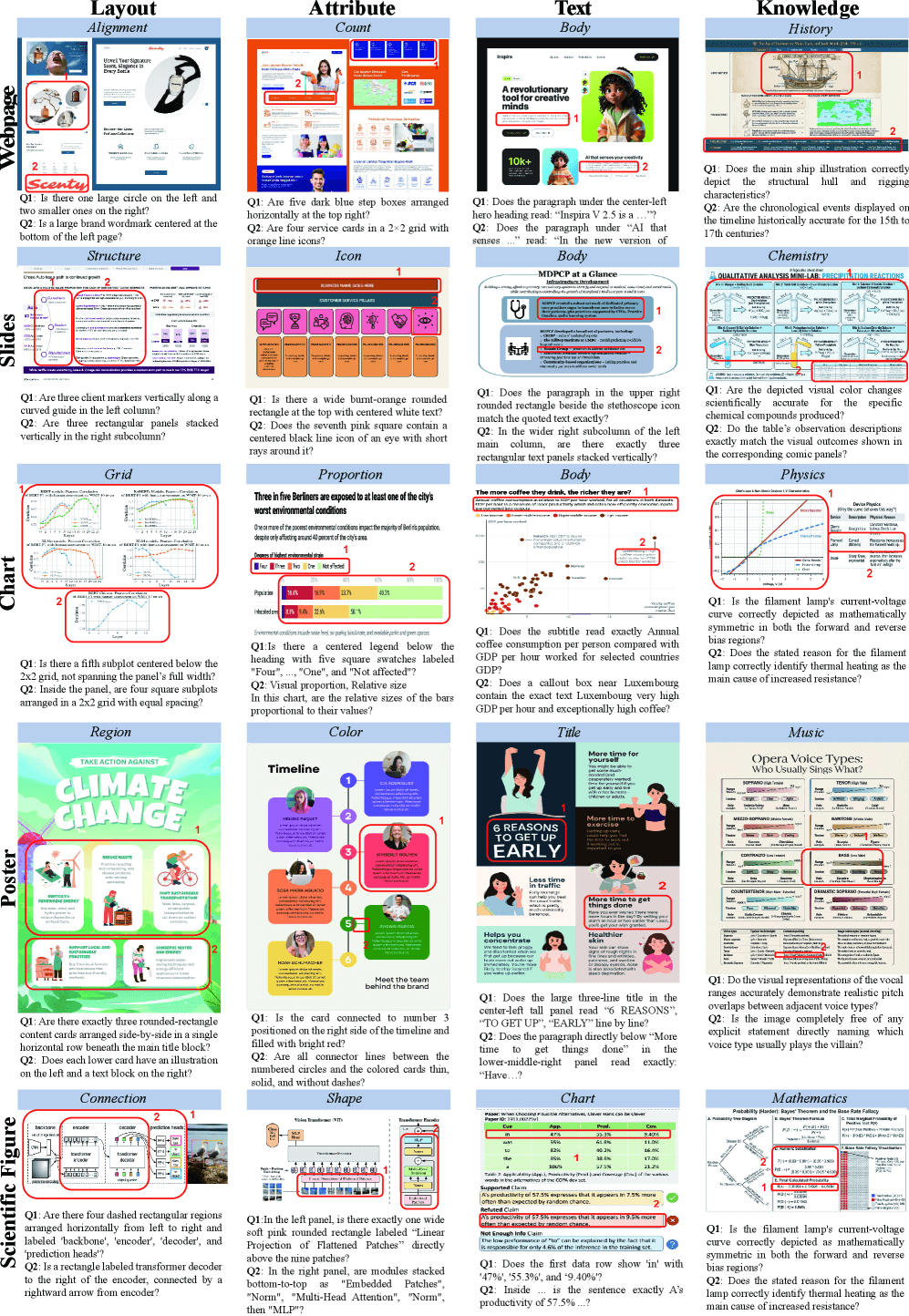

Figure 1 from the BizGenEval paper: real-world samples across 5 content domains (slides, webpages, posters, charts, scientific figures) and 4 capability dimensions (text rendering, layout control, attribute binding, knowledge-based reasoning).

Not artistic prompts. Not "a cat wearing a top hat." Business visuals: slides, charts, webpages, posters, scientific figures.

The benchmark includes 1,819 candidate images collected from professional sources, filtered through multiple rounds of human review to ensure quality and relevance. From that pool, 20 reference images were selected per domain-task combination.

Each model gets scored across four dimensions:

Text rendering. Can the model produce accurate, legible text inside the generated image?

Layout control. Can it organize elements spatially with correct structure and hierarchy?

Attribute binding. Does it get fine-grained properties right, like color, shape, style, and quantity?

Knowledge-based reasoning. Can it incorporate domain-specific knowledge into the visual?

The prompt set includes 300 content-based prompts and 100 knowledge-based prompts. Each task is split into easy and hard instances. Easy tasks test basic correctness. Hard tasks demand precise control, multi-step reasoning, and fine-grained detail.
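
The 300 and 100 figures line up with the 20-references-per-combination number above. Here is a quick sanity check of that arithmetic. It assumes each of the 5 domains is paired with each of the 4 dimensions and that every reference image yields one prompt; that pairing is my reading of the numbers, not something spelled out here.

```python
# Counts quoted in this article. The one-prompt-per-reference mapping is an assumption.
DOMAINS = ["slides", "webpages", "posters", "charts", "scientific figures"]
CONTENT_DIMENSIONS = ["text rendering", "layout control", "attribute binding"]
KNOWLEDGE_DIMENSIONS = ["knowledge-based reasoning"]
REFERENCES_PER_COMBINATION = 20  # "20 reference images ... per domain-task combination"

content_prompts = len(DOMAINS) * len(CONTENT_DIMENSIONS) * REFERENCES_PER_COMBINATION
knowledge_prompts = len(DOMAINS) * len(KNOWLEDGE_DIMENSIONS) * REFERENCES_PER_COMBINATION
total_prompts = content_prompts + knowledge_prompts

print(content_prompts, knowledge_prompts, total_prompts)  # 300 100 400
```

Under that assumption, the three content dimensions account for the 300 content-based prompts and the single reasoning dimension accounts for the 100 knowledge-based ones.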

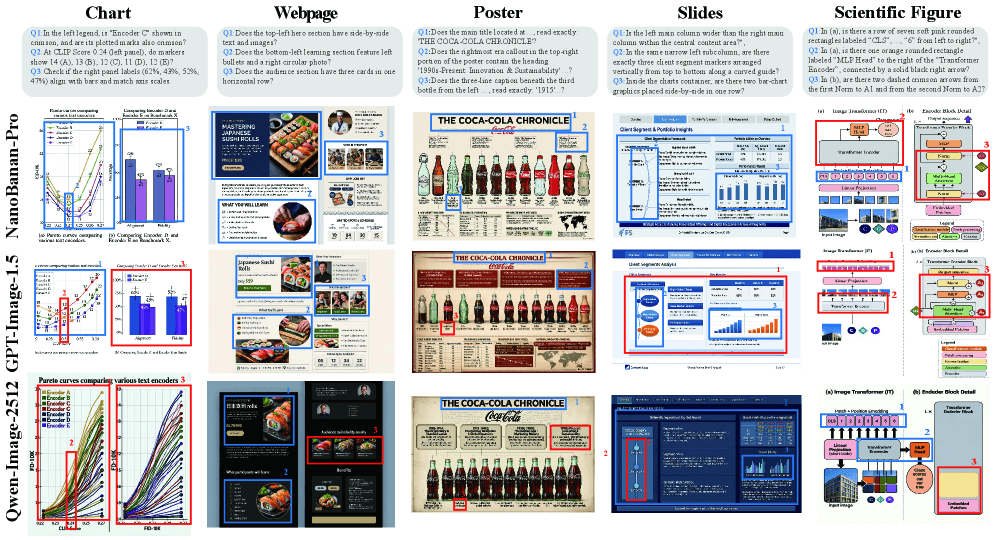

They tested 26 models. Ten closed-source (including GPT-Image-1.5, Imagen-4, FLUX.2-Pro, Seedream). Sixteen open-source (FLUX variants, Qwen-Image, HunyuanImage, SD3.5-Large, and others).

AI image generator limitations: what the BizGenEval results reveal

Four findings stand out.

Models approximate layouts. They don't enforce them. Even top-tier systems can replicate the general style of commercial designs. A poster looks poster-ish. A chart looks chart-ish. But when you check whether the elements are in the right positions, whether the counts are correct, whether the hierarchy is respected, things fall apart. The models are pattern-matching, not composing.

Layout control and attribute binding are the hardest tasks. Across all 26 models, these two dimensions consistently scored the lowest. Getting a headline to sit in the right spot relative to a subheading, or making sure there are exactly four bullet points instead of three or five, remains beyond what current image generators can reliably do.

Closed-source models dominate text and reasoning. Open-source models struggle. There's a stark divide. Models backed by large multimodal foundation systems (GPT-Image-1.5, Imagen-4) handle text rendering and knowledge tasks reasonably well. Most open-source models score near zero on these same tasks. The capability gap is wide.

Natural image benchmarks don't predict commercial design performance. This one matters. Models like GPT-Image-1.0 and Qwen-Image score well on GenEval, a standard benchmark for natural image generation. But on BizGenEval, their performance drops significantly. Structured layouts, dense text, and multi-constraint generation are different problems. Being good at one doesn't make you good at the other.

The human evaluation backs this up. A study with 59 participants across 2,000 questions showed 90.88% agreement with the automated scoring system. Cohen's kappa was 0.77, which indicates substantial agreement beyond what chance alone would produce. The benchmark isn't hallucinating the results.
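
For readers who haven't met Cohen's kappa: raw agreement counts how often two raters give the same answer, and kappa discounts the portion of that agreement you'd expect by chance. Below is a minimal sketch of both calculations on made-up toy labels. The data is illustrative only and has nothing to do with the actual BizGenEval study.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Fraction of items on which the two raters gave the same label."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: (p_o - p_e) / (1 - p_e)."""
    n = len(rater_a)
    p_o = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    # Chance agreement: probability both raters pick the same label independently.
    p_e = sum((counts_a[label] / n) * (counts_b[label] / n) for label in labels)
    if p_e == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Toy labels, invented for illustration.
human = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "yes"]
judge = ["yes", "yes", "no", "yes", "yes", "no", "yes", "no", "no", "yes"]

print(percent_agreement(human, judge))       # 0.8
print(round(cohens_kappa(human, judge), 3))  # 0.583
```

Note how 80% raw agreement shrinks to a kappa of about 0.58 once chance is removed. That is why a kappa of 0.77 alongside 90.88% agreement is a meaningful result and not a redundant one.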

Figure 4 from BizGenEval: qualitative comparison across content domains. Red boxes mark incorrect regions. Even top-tier models fail on precise layout and attribute tasks.

Graphic-Design-Bench: Image generation vs. graphic design, tested at scale

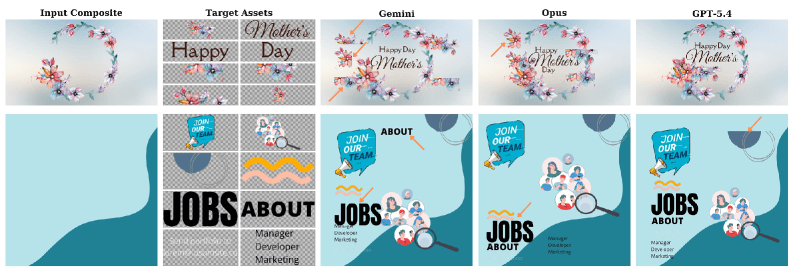

Graphic-Design-Bench takes a different approach.

Figure 1 from the Graphic-Design-Bench paper: LICA design layouts with structured, component-level annotations capturing full hierarchy and rich metadata beyond coarse bounding boxes.

Instead of treating commercial visuals as a single category, Graphic-Design-Bench breaks graphic design into 49 distinct tasks organized across five axes:

Layout. Grid placement, margin respect, z-order (layering), spatial relationships between elements.

Typography. Font hierarchy, legibility, text-to-background contrast, typographic conventions.

Infographics. Translating raw data into visual hierarchy that communicates clearly.

Template and design semantics. Understanding the intent behind a design. Is this a minimalist business card or a maximalist concert poster? The model needs to know the difference.

Animation. A forward-looking axis that tests whether models can decompose designs into temporal sequences.

The benchmark is grounded in real professional design data, not random internet images. It tests models the way you'd test a new hire: give them a brief, see if they understand the constraints, check if the output meets professional standards.
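
To make one of those checks concrete, take text-to-background contrast from the typography axis. I don't know which metric Graphic-Design-Bench uses for it. The sketch below uses the WCAG contrast ratio, a widely used accessibility formula, as a stand-in, so treat it as an illustration of the kind of check involved and not as the benchmark's method.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG relative luminance)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text=False):
    """WCAG AA thresholds: 4.5 for normal text, 3.0 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 1))  # 21.0
print(passes_aa((200, 200, 200), (255, 255, 255)))           # False
```

A check like this needs to know the text color and the background color as values. With a flat generated image you have to estimate both from pixels, which is part of why these tasks are hard to score and hard to get right.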

Why these benchmarks matter for anyone building with AI

If you're an engineer or product team evaluating image generation APIs for commercial use, these benchmarks confirm something important. You can't just plug in FLUX or DALL-E or Stable Diffusion and expect production-quality design output. The models are impressive at generating natural images. They are measurably weak at the specific capabilities that commercial design requires.

Layout precision. Text accuracy. Attribute control. Structural hierarchy. These aren't edge cases. They're the core requirements.

A display ad that gets the headline wrong is useless. A banner where the CTA overlaps the product image is useless. A social post where the text is illegible against the background is useless. The benchmarks show that these failures aren't rare. They're the norm, even among the best available models.

Why AI image generation can't replace graphic design: the architecture gap

These are limitations of generative AI at a fundamental level, not surface-level bugs that get fixed in the next release.

Image generation models predict pixels. They learn statistical patterns from training data and produce outputs that look plausible at the pixel level. That works brilliantly for natural images, photorealistic scenes, artistic compositions where "close enough" is fine.

Graphic design has different rules. A headline isn't a pattern of pixels. It's live text that needs to be editable, correctly sized, properly placed within a hierarchy, and rendered in the right font. A layout isn't an approximate arrangement. It's a precise spatial structure where every element has a defined position, size, and relationship to every other element.

Pixel prediction doesn't give you those guarantees. You might get lucky on any individual generation. But the benchmarks show what happens at scale: consistent failures in exactly the areas that matter most for professional use. This is why the question "can AI replace graphic designers" keeps coming up. The answer depends entirely on what kind of AI you're talking about.

A pixel-based image generator? No. The data is clear on that.

A compositional design model that understands structure? That's a different conversation. This is why we built Sivi's Large Design Model as a compositional system, not a pixel generator.

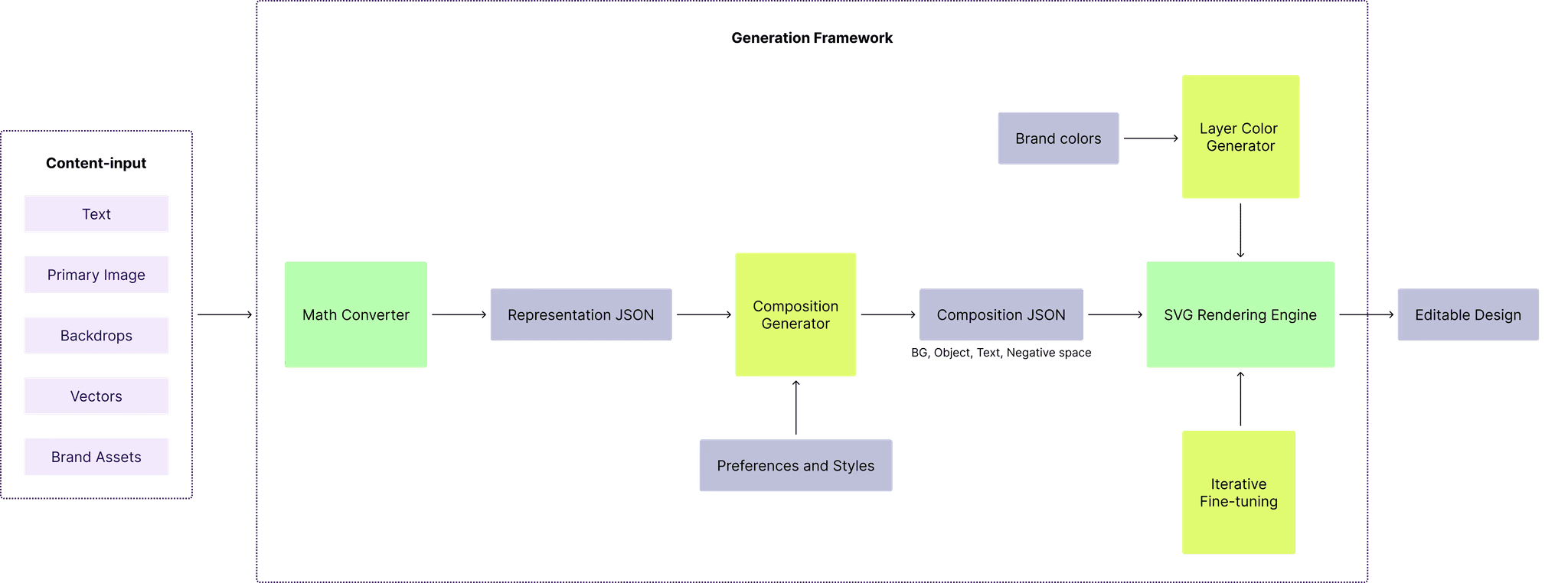

Sivi's Large Design Model compositional pipeline.

The LDM doesn't predict what a design should look like. It composes design structures: text as live text, images as placed components, vectors as vectors, each on its own editable layer. Layout is an explicit structural decision, not an emergent property of pixel patterns.
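
To show what "structure, not pixels" means in practice, here is a deliberately small sketch of a layered design as a data structure. Every class and field name below is hypothetical and chosen for illustration. It is not Sivi's actual schema or API.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Box:
    x: int
    y: int
    width: int
    height: int


@dataclass
class TextLayer:
    name: str
    content: str  # live text, stored as a string instead of pixels
    font_family: str
    font_size: int
    color: str
    box: Box
    z_index: int


@dataclass
class ImageLayer:
    name: str
    source: str
    box: Box
    z_index: int


@dataclass
class ShapeLayer:
    name: str
    fill: str
    box: Box
    z_index: int


Layer = Union[TextLayer, ImageLayer, ShapeLayer]


@dataclass
class Design:
    width: int
    height: int
    layers: List[Layer] = field(default_factory=list)

    def paint_order(self) -> List[Layer]:
        """Layers sorted back to front by explicit z-order."""
        return sorted(self.layers, key=lambda layer: layer.z_index)

    def edit_text(self, name: str, new_content: str) -> None:
        """Change the text of a named layer without touching anything else."""
        for layer in self.layers:
            if isinstance(layer, TextLayer) and layer.name == name:
                layer.content = new_content
                return
        raise KeyError(name)


design = Design(
    width=1080,
    height=1080,
    layers=[
        TextLayer("headline", "Summer Sale", "Inter", 96, "#FFFFFF", Box(80, 120, 920, 120), z_index=2),
        ShapeLayer("background", "#0B3D91", Box(0, 0, 1080, 1080), z_index=0),
        ImageLayer("product", "product.png", Box(240, 320, 600, 600), z_index=1),
    ],
)
design.edit_text("headline", "Winter Sale")
print([layer.name for layer in design.paint_order()])  # ['background', 'product', 'headline']
```

The point of the sketch: changing the headline is a string assignment, and layering is a sort on a stored number. Neither operation exists for a flat image.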

The benchmarks are measuring the gap between pixel prediction and structured design, even if they don't frame it that way.

How Sivi's Large Design Model addresses what the benchmarks measure

The four dimensions BizGenEval scores (text rendering, layout control, attribute binding, and knowledge-based reasoning) map directly to decisions we made years ago when building Sivi's Large Design Model. The benchmarks are new. The problems they measure are not. We built around these constraints long before anyone published a score for them.

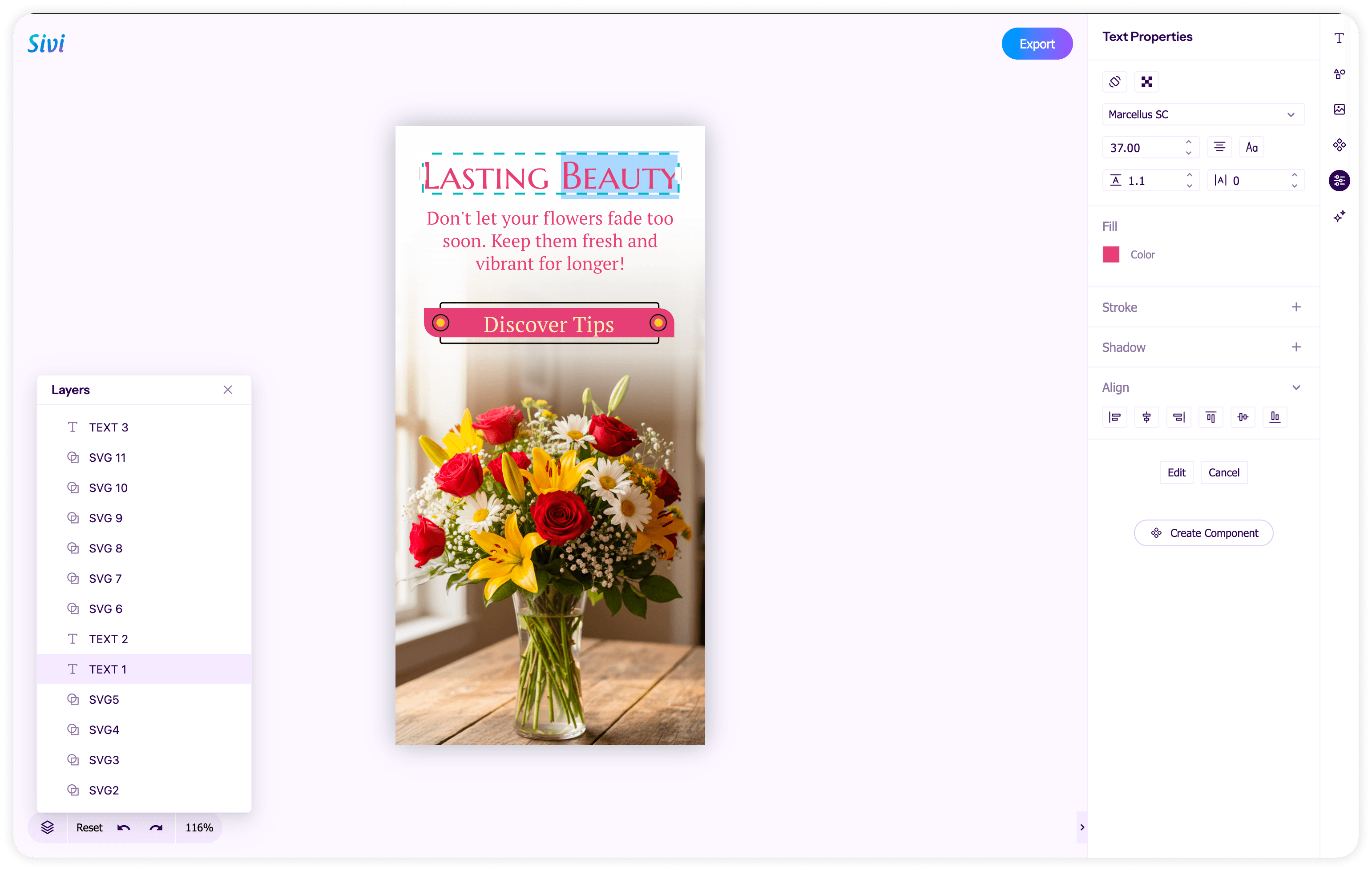

Sivi design studio screenshot of an ad created with AI Ad Generator with the layers panel open, showing editable headline, subhead, CTA button, logo, product image, and background as separate layers.

Text rendering. In Sivi's LDM, text is never pixels. Every headline, subheading, and body line is live text on its own layer, rendered in the specified font at the specified size. There's no OCR-style guessing, no pixel approximation of letterforms. The model places text as a structured element with defined properties: font family, weight, size, color, position. That's why text in Sivi outputs is always accurate and always editable. The failure mode that BizGenEval catches, garbled or illegible text baked into an image, can't happen in a compositional system.

Layout control. This is where the LDM's architecture diverges most from image generators. Pixel models treat layout as an emergent property. The model learns that poster-like images tend to have big text near the top, so it approximates that pattern. Sivi's model treats layout as an explicit structural decision. Element positions, sizes, margins, and z-order are computed outputs, not statistical guesses. A headline goes where the composition logic places it, not where pixel patterns suggest it might belong. The consistent layout failures that both benchmarks document are artifacts of predicting spatial relationships through pixels. A compositional model doesn't have that problem because spatial relationships are first-class outputs.

Attribute binding. When BizGenEval tests whether a model gets the right number of bullet points, the right colors, the right styles, it's testing whether the model can maintain precise control over individual elements. Image generators struggle here because every attribute is entangled in the same pixel space. Sivi's LDM generates each element as a discrete object with explicit properties. Four bullet points means four text objects. A red CTA button is a shape layer with a defined fill color. These aren't hopes. They're specified values in a structured output.
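
When the output is structured, checks like "exactly four bullet points", "the CTA is red", and "the CTA doesn't cover the product image" become plain comparisons. The sketch below runs those three checks on a list of layer dictionaries. The layer format, role names, and function names are hypothetical, invented for this example, and are not Sivi's output format or BizGenEval's scoring code.

```python
def boxes_overlap(a, b):
    """Axis-aligned overlap test for boxes given as (x, y, width, height)."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    return ax < bx + bw and bx < ax + aw and ay < by + bh and by < ay + ah


def check_brief(layers, expected_bullets, expected_cta_fill):
    """Return a list of human-readable problems; an empty list means all checks pass."""
    problems = []
    bullets = [layer for layer in layers if layer["role"] == "bullet"]
    if len(bullets) != expected_bullets:
        problems.append(f"expected {expected_bullets} bullets, found {len(bullets)}")

    cta = next((layer for layer in layers if layer["role"] == "cta"), None)
    product = next((layer for layer in layers if layer["role"] == "product_image"), None)
    if cta is None:
        problems.append("no CTA layer")
    elif cta["fill"].lower() != expected_cta_fill.lower():
        problems.append(f"CTA fill is {cta['fill']}, expected {expected_cta_fill}")
    if cta and product and boxes_overlap(cta["box"], product["box"]):
        problems.append("CTA overlaps product image")
    return problems


good = [
    {"role": "bullet", "box": (80, 200 + 60 * i, 600, 40)} for i in range(4)
] + [
    {"role": "cta", "fill": "#E02020", "box": (80, 900, 300, 80)},
    {"role": "product_image", "box": (500, 450, 500, 400)},
]

# Same design with one bullet dropped and the CTA moved onto the product image.
bad = good[1:4] + [
    {"role": "cta", "fill": "#E02020", "box": (450, 800, 300, 80)},
    {"role": "product_image", "box": (500, 450, 500, 400)},
]

print(check_brief(good, expected_bullets=4, expected_cta_fill="#e02020"))  # []
print(check_brief(bad, expected_bullets=4, expected_cta_fill="#e02020"))
```

With a pixel output, each of these checks first requires detecting the elements in the image, which introduces its own errors. With discrete objects, the count is a `len()` call.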

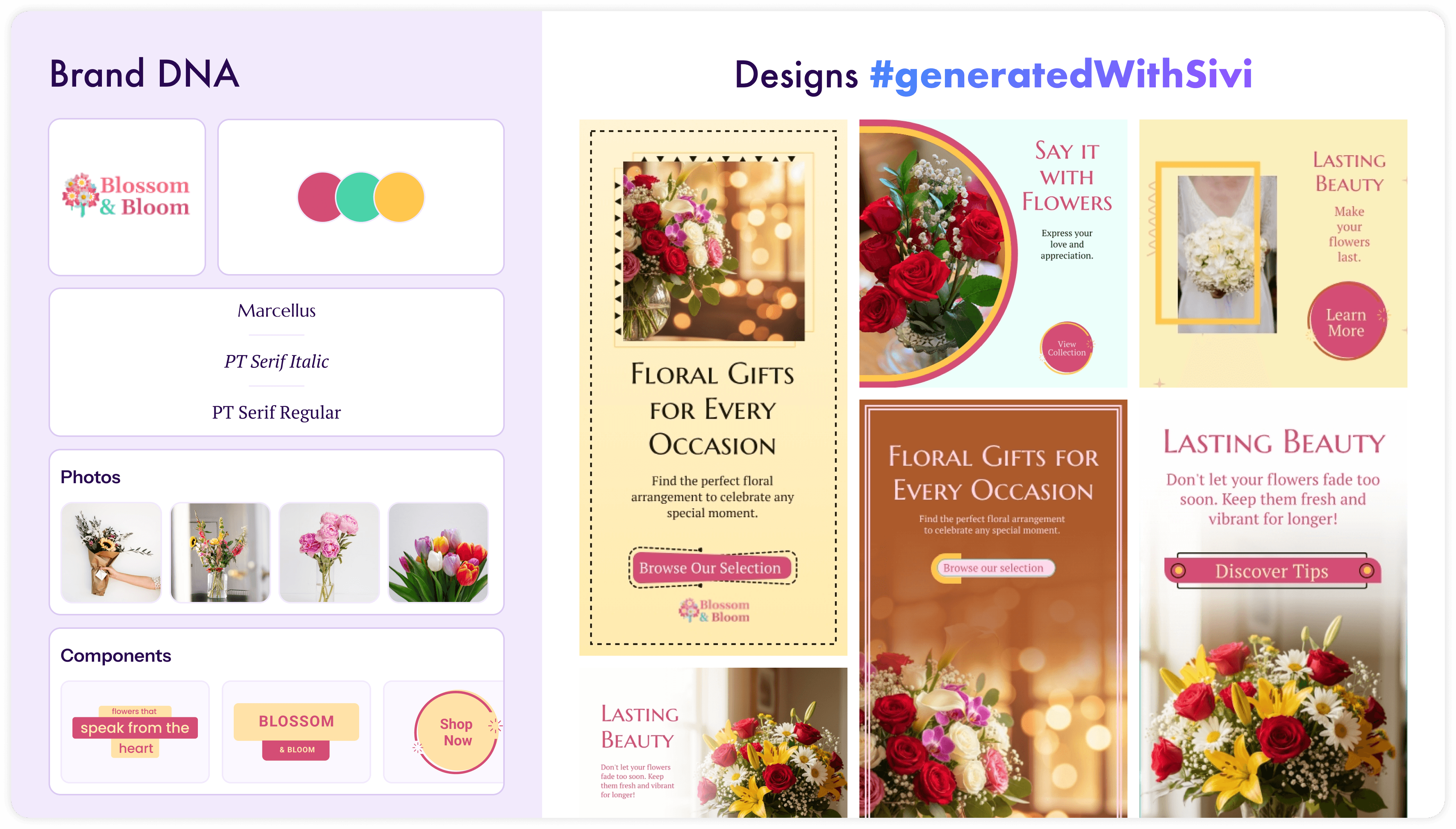

Sivi brand kit alongside on-brand ad variations in 1080x1080 social, 300x600 display, and other formats generated from one prompt.

Brand compliance, the dimension the benchmarks don't test yet. Neither benchmark evaluates whether a model can produce output that follows brand guidelines. In production, this matters as much as the other four dimensions combined. Sivi's LDM takes a brand kit as input: colors, fonts, logos, and image preferences. With the upcoming Sivi Gen-3, brands will be able to set up their own component library, and the model will generate designs using those components directly. The composition is constrained by brand rules from the start. The model doesn't generate a design and then try to make it on-brand. Your brand's style and components shape the solution space before generation begins.
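
Here is a toy illustration of the difference between "generate, then fix" and "constrain first". Candidate text styles are only ever drawn from the brand kit, so every candidate is on-brand by construction. The kit contents and function names are made up for the example and say nothing about how Sivi implements this internally.

```python
from itertools import product

# Hypothetical brand kit, for illustration only.
BRAND_KIT = {
    "colors": ["#0B3D91", "#FFFFFF", "#F2A900"],
    "fonts": ["Inter", "Source Serif"],
}


def candidate_text_styles(kit):
    """Enumerate font / text color / background combinations drawn only from the kit."""
    return [
        {"font": font, "color": fg, "background": bg}
        for font, fg, bg in product(kit["fonts"], kit["colors"], kit["colors"])
        if fg != bg  # text in the background color would be invisible
    ]


def is_on_brand(style, kit):
    """Post-hoc check, shown for contrast with the constrained enumeration above."""
    return (
        style["font"] in kit["fonts"]
        and style["color"] in kit["colors"]
        and style["background"] in kit["colors"]
    )


styles = candidate_text_styles(BRAND_KIT)
print(len(styles))                                    # 12
print(all(is_on_brand(s, BRAND_KIT) for s in styles)) # True
```

The post-hoc check never fails here because an off-brand style was never a candidate. That is the sense in which the brand shapes the solution space before generation begins.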

The benchmarks test whether AI can get the basics right: accurate text, correct layout, precise attributes. A compositional design model handles those by architecture, not by luck. The harder question, and the one the field hasn't benchmarked yet, is whether AI can produce designs that are production-ready for a specific brand. That's where the real gap between image generation and design generation shows up.

Will AI replace graphic designers? What these benchmarks actually tell us

Microsoft didn't build BizGenEval because image generators are doing fine. They built it because the field needed a way to measure where things actually stand. And the measurements show that commercial design is a fundamentally harder problem than the existing benchmarks captured.

So will AI make graphic designers obsolete? Not with the current generation of image models. The benchmarks make that unambiguous. But the question itself is slightly off. The real question is whether AI can handle the production side of graphic design, the repetitive, high-volume, format-specific work that eats up most of a designer's week.

For model developers, the message is clear: you need specialized evaluation for structured visual output. GenEval scores are not enough.

For product teams using AI to generate marketing assets, the message is equally direct. The gap between what image generators produce and what production design requires isn't closing through incremental improvements to diffusion models. It requires a different kind of system, one that understands composition, hierarchy, and constraints at the structural level.

For us at Sivi, these benchmarks validate the direction we chose when we started building the LDM. Sivi was started before the image generation era, grounded in the real-world design constraints that these benchmarks now bring into focus. The limitations the benchmarks expose (layout control, text precision, attribute binding), along with the brand compliance they don't yet test, map directly to the capabilities the LDM was designed to address.

AI isn't replacing graphic designers. But it is replacing the pixel-pushing part of their job, if the AI actually understands design. The research is just beginning to catch up. The problem, however, has been there all along.

Questions the benchmarks answer

Can AI do graphic design?

AI image generators (DALL-E, Midjourney, Stable Diffusion, FLUX) can produce visually appealing images, but they consistently fail at graphic design tasks. The BizGenEval and Graphic-Design-Bench benchmarks tested 26+ models and found critical failures in layout precision, text accuracy, and attribute control. These are core requirements for commercial design work. Compositional AI systems like Sivi's Large Design Model approach the problem differently by generating structured, layered designs instead of flat images.

Will AI replace graphic designers?

Not with image generators. The benchmarks show that current models can't reliably handle layout control, text rendering, or structural hierarchy, which are the basics of professional design. AI is more likely to replace the repetitive production work (resizing, reformatting, bulk variations) while designers focus on creative strategy and brand direction. The tools that will matter are those that generate editable, structured designs, not frozen pixel outputs.

What are the main limitations of AI image generators for business use?

The BizGenEval benchmark identified four critical limitations: inaccurate text rendering, poor layout control, unreliable attribute binding (wrong colors, counts, or styles), and weak performance on tasks requiring domain knowledge. Even top models like GPT-Image-1.5 struggle with precise spatial placement. Strong performance on natural image benchmarks like GenEval does not predict success on commercial design tasks.

What is the difference between image generation and design generation?

Image generation produces a single flat image where all elements are fused into pixels. Design generation produces structured compositions with separate layers for text, images, vectors, and backgrounds, each independently editable. A generated image can't be resized, reformatted, or brand-adjusted without starting over. A generated design can be. The Graphic-Design-Bench benchmark tests this distinction across 49 tasks covering layout, typography, infographics, and design semantics.

What is BizGenEval?

BizGenEval is a benchmark created by Microsoft Research to evaluate AI image generators on commercial visual content. It tests models across five categories (slides, charts, webpages, posters, scientific figures) and scores them on text rendering, layout control, attribute binding, and knowledge-based reasoning. The benchmark uses 400 prompts and was validated by 59 human evaluators with 90.88% agreement.

What is a Large Design Model?

Large Design Model (LDM) refers to the model developed by Sivi for generating structured, multi-layered graphic designs from text input. Unlike image generators that output flat pixels, an LDM produces editable compositions where text, images, and vectors exist as separate layers. Sivi's LDM is a production system that supports brand kits, custom sizing, 72+ languages, and exports to multiple formats. It treats design as a structural problem, not a pixel prediction problem.
